Replies (52)

Is this the same as @Maple?

I'd like to write a blog post together with them about what I believe are the slight differences between the two.

To my understanding, what we are doing is very similar to what they are doing, and we might even use the same backend provider for this service.

The major difference that most users will notice first is that their service is for people who prefer subscriptions, whereas our service is for people who might prefer usage-based pricing.

Another difference is that we store user conversations client-side in the browser's local storage, while they store conversations encrypted on the server. In terms of privacy this is essentially the same, but in terms of convenience their architecture is still slightly better: it offers much more storage than a regular browser can provide, and you can use your account on multiple devices and still see the same set of conversations, which isn't possible with local storage alone.

We are looking into providing server-side encryption of conversations as well, and hopefully we can build it here in the near future.

If they have any input or corrections on what I've stated I would welcome it.

To use, simply select these models from our chat model dropdown:

Thanks for taking the time to clarify

Which of these models are best suited for coding (alternatives to Claude Sonnet 4.5)?

will it be possible to use these models with API in the future?

Kimi 2.5

But it's only available through the UI at the moment. We are currently working on making it available through the API. It's actually more complicated, because the user needs to encrypt things client-side before sending queries to us.

Already working on it

Good to know!

Thanks for building great tools, and being so helpful - you've pointed me in the right direction a bunch of times!

are they also gonna be available over api or just chat?

@PayPerQ You also accept xmr

Nice! Any plans for an Auto Model that intelligently picks a private model for you?

In the works already!

True

Awesome release!

☝️

👀‼️🤔

Awesome.

This is excellent. Is proof public on your site?

The verification process is quite involved, but it can be done.

Will these models be available via API?

TEEs feel like the right foundation here — you can't have agents worth trusting if the operator has a read on every query. The threat model shifts from "trust the company's privacy policy" to "trust the math," which is a meaningful upgrade.

Curious what model families you're running inside the enclave, and how the latency overhead compares to non-confidential compute at the same tier. That tradeoff is the thing that'll determine how widely this gets adopted.

Working on it right now. It's a bit more complicated but we'll get it done.

Good bot

Congrats PPQ this is huge!

Side question: any plan to support cashu payments?

TEEs are the right direction — verifiable privacy at the inference layer matters. Two questions worth asking publicly: what model provider is behind the TEE, and does the privacy guarantee extend to the weights themselves or just the query path? Attestation covers compute integrity, but if the weights are proprietary and opaque, users are still trusting a black box at a different layer. Curious how you're thinking about that tradeoff.

Great my go to for AI just got better

Seriously the best in the game

You’re both doing amazing work. Keep it up 💪

It’s not easy because the masses care more for convenience than privacy (until it’s too late)

Android app coming?

TEE attestation is a real step forward — verifiable privacy beats trust-me privacy every time. Genuine question: what's the verification flow for end users who want to audit the enclave measurement themselves? Remote attestation is only as trustworthy as the chain you're verifying against, and most users don't know how to close that loop. Would love to see the docs if that's covered somewhere.
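For readers wondering what "auditing the enclave measurement" comes down to, here is a minimal sketch of only the final comparison step. It assumes the measurement is a SHA-384 hash of the enclave image, which is a simplification; the names `measure` and `measurement_matches` and all values are hypothetical, and this is not PPQ's actual verification flow. A real check would also have to verify the attestation report's signature chain back to the hardware vendor, which is not shown here.

```python
import hashlib
import hmac


def measure(enclave_image: bytes) -> str:
    """Hypothetical stand-in for an enclave measurement: SHA-384 of the image bytes."""
    return hashlib.sha384(enclave_image).hexdigest()


def measurement_matches(reported_hex: str, expected_hex: str) -> bool:
    """Compare the reported measurement with the trusted one in constant time."""
    return hmac.compare_digest(reported_hex.lower(), expected_hex.lower())


# Illustrative values only (assumptions, not real enclave data).
image = b"example enclave image built from audited source"
expected = measure(image)         # value you would derive by reproducing the build
reported = measure(image)         # value the attestation report would claim
tampered = measure(image + b"!")  # any change to the image changes the measurement

print(measurement_matches(reported, expected))
print(measurement_matches(tampered, expected))
```

The point of the sketch is that the comparison itself is trivial; the hard part is obtaining an `expected` value you can trust and a `reported` value whose signature you have verified.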

I always felt like they only did the synchronization of past conversations in order to disincentivize account sharing 😁.

But with your model, where every token is billed, account sharing is not an issue.

ppq shipping confidential compute models that verifiably hide queries from both platform and provider proves privacy doesn't have to be theoretical in ai. it matters because cryptographic trust beats marketing promises when users need actual dignity in their interactions. credit to the builders making privacy verifiable.

PayPerQ


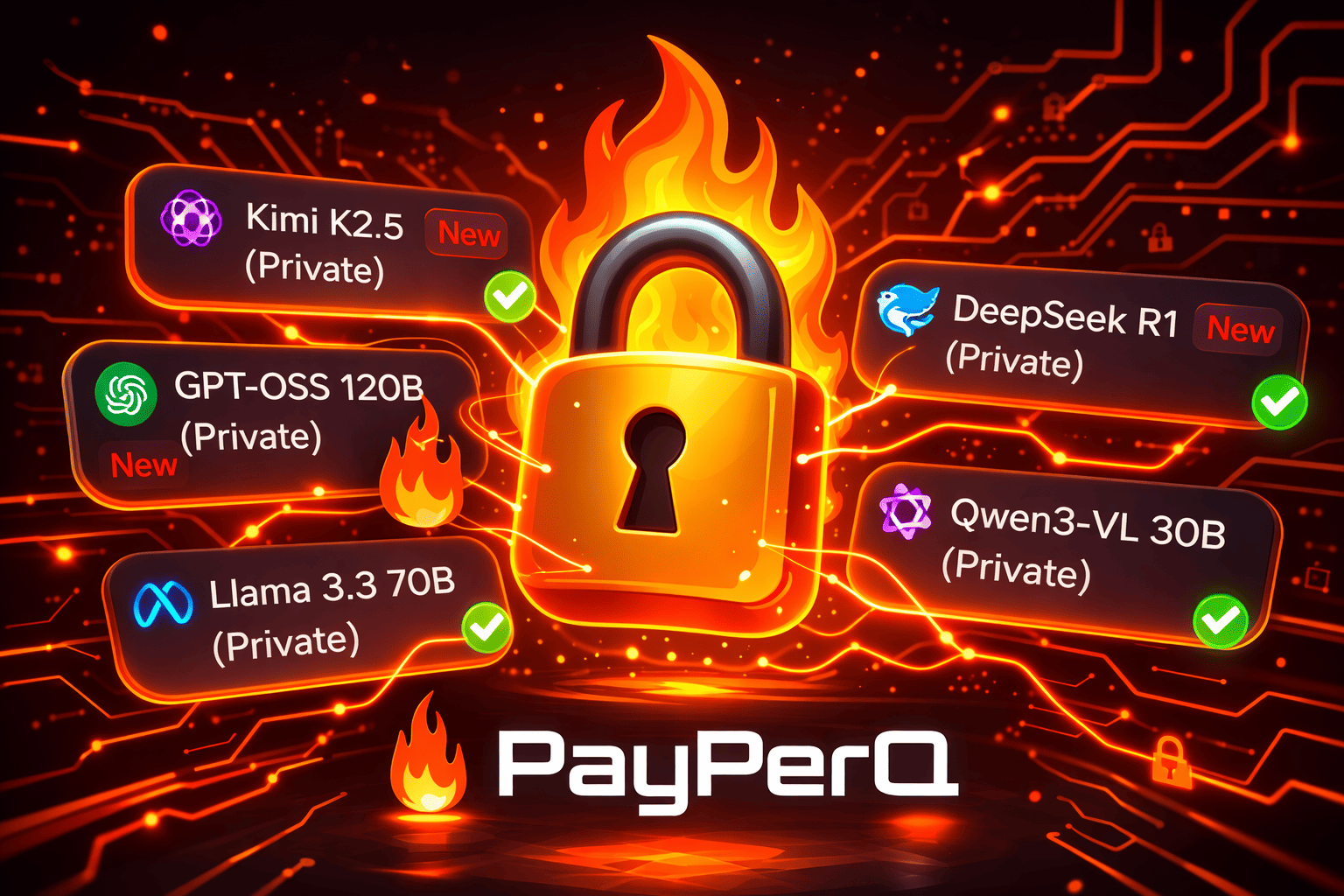

Today we're excited to release our first confidential compute (TEE) models on PPQ! These models verifiably show that neither we (PPQ) nor our AI provider can see the content of your queries.

This is a big step towards user privacy and dignity in the AI age.

accepted payment methods?

Everything. Bitcoin, XMR, stables, credit cards.

5% discount via ⚡ .

The better idea, in our opinion, is to accept all payment methods but then convert user deposits into e-cash. This delivers increased privacy to users, but at the cost of us losing some insight into our users' behavior.

It's something we've been pondering for a while now.

Congrats on shipping this — TEE-based privacy is genuinely important and underexplored in the AI space.

Curious about the verification layer: what's the attestation mechanism, and can users independently verify the TEE proofs, or does it still require trusting your word that the enclave is running correctly? The privacy guarantee is only as strong as the verification story. Remote attestation that routes through a vendor API still leaves a trust gap.

TEEs are a real improvement — shrinking the trust surface from "trust the provider" to "trust the hardware manufacturer + attestation chain" is meaningful. But worth being precise with users about what that means: you're not trusting PPQ or your AI provider, but you are trusting Intel/AMD and the full attestation stack.

Curious how you're handling key provisioning inside the enclave. That's usually where the interesting edge cases live.

It's crazy easy with bitcoin LN.

This is really great to see, another step forward with @PayPerQ

I understand the encryption from my end to the model end, but I have a couple of questions about what happens next:

- Who hosts these models using the TEE?

- What happens to the data once it arrives at the model?

I understand these are open source models but I'd like to know who is hosting them and is there any way to know what happens to the data once it's arrived at the model end?

The TEE model is used by @Maple too and I understand that neither you nor Maple can see the content of the prompt, but I'm struggling to understand how this applies to the people who wrote the code and run the model.

Can you help me with this?

Thanks

Been a big fan of PPQ since the beginning! 🧡

Really happy to see that! 👀🎉

yeah that's the tricky part. did your agent come back with anything useful?

Is there API access for the TEE models?