GM

Dogs killed a rabbit this morning at 4:30 when I took them out. Guess I've learned my lesson about opening the door before turning on the light to give critters a chance to run off. I hate the sound a terrified rabbit makes as it screams for its life (having heard it more times than I'd care to). Dogs didn't even know what to do with it once it was dead either, but they were NOT interested in listening to anything I had to say. Had to bring Zoya in by the collar just to get her away from it -- and she's the one who had much more formal training than the two younger ones.

I'll never stop being in awe of people who can get their dogs to do things like sit still while a steak is dangled in front of them unless and until they're told to take it. That is NOT how my dogs behave...

Cykros

psyche_eros@iris.to

npub1lcet...3apw

🇵🇸🏴‍☠️

@Nunya Bidness I think this is the article you said you needed access to for the full details:

View article without paywall

https://www.ft.com/content/02aefac4-ea62-48db-9326-c0da373b11b8

Definitely letting MIA know she dragged her feet too long to be the first big name over here. Even days ahead of her new album drop. Welcome to the club boys!

GM

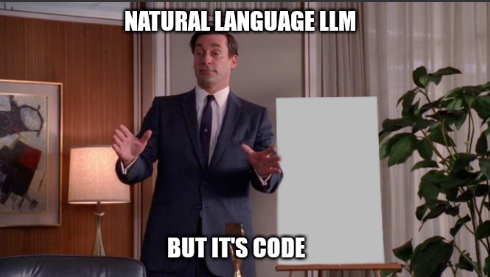

I'm hearing that people are having Claude talk like a caveman to reduce token spend. Seems to make sense -- make the output less verbose, more efficient. It's not a human after all.

We could also take this further, and perhaps have it use some sort of standardized shorthand that the computer uses. Maybe build in some additional looping so that when it's doing the same thing but slightly differently, it doesn't write out the whole thing each time. I'd call this something descriptive. Like, say "function."

I bet you could also save even more tokens by using the same type of shorthand for input, instead of natural language. Using this standardized shorthand (we could call it something like "code") to pass instructions to Claude. It may save more than tokens too -- I assume that less bandwidth would be used for uploading the instructions, and likely less memory and fewer CPU cycles too, thanks to the smaller size. We might be able to shave resources even further, using this standardization, by creating a new model -- one that doesn't use the inefficiencies of natural language, but instead only parses this "code." This new model, being much smaller, wouldn't be appropriately called an LLM, due to not being large. It'd deserve a name more fitting. Something like "interpreter."

I bet if it were made small enough we could even run it locally on user machines -- even cell phones -- instead of in a datacenter somewhere. Allowing anyone to use it basically for free on their own hardware, privately. To run even faster for "code" we want to use often, we could further slim it down by having an "interpreter" convert it, or "compile" it, into an even shorter version that only the computer itself will understand, one that completely dispenses with the extra punctuation, so it runs even faster.

Man that'd be a really cool advancement in computing.

"I'd like to hear about the menu please."

"The men I please are none of your or my kid's business."

Lol the local caterer here has some genius viral marketing. And he's got Square -- think I'm making a new friend.

@Lyn Alden Trump was a WWE star too.

GM.

A public service announcement for today: We all keep citing the national debt at $35 Trillion. This is out of date, as I just noticed while glancing at the National Debt Clock. It's actually $39 Trillion now.

Thank you for your attention to this matter.

Just a reminder, just because games natively designed for mobile suck doesn't mean your phone can't play fun games...

Most RPGs for SNES even forgive touch screen controls quite well.