If I did everything correctly, opening this website should let you run any AI language model locally in your browser, on any device.

It uses your device's own hardware, through the new WebGPU API, to run these models.

This is just a demo; I am working on a proper interface for NostrNet.work users.

Demo: https://ai.nostrnet.work

Replies (17)

running locally mined AI on a pc as native app is resource intensive already. i think this will be even more so

Yes, it runs entirely on your hardware. On the upcoming NostrNet PWA, you should even be able to turn off your internet connection and it will still work. There are numerous options, including 1-billion-parameter models that can also run on your phone. Click on the "model name" at the top centre to see the list of all the models.

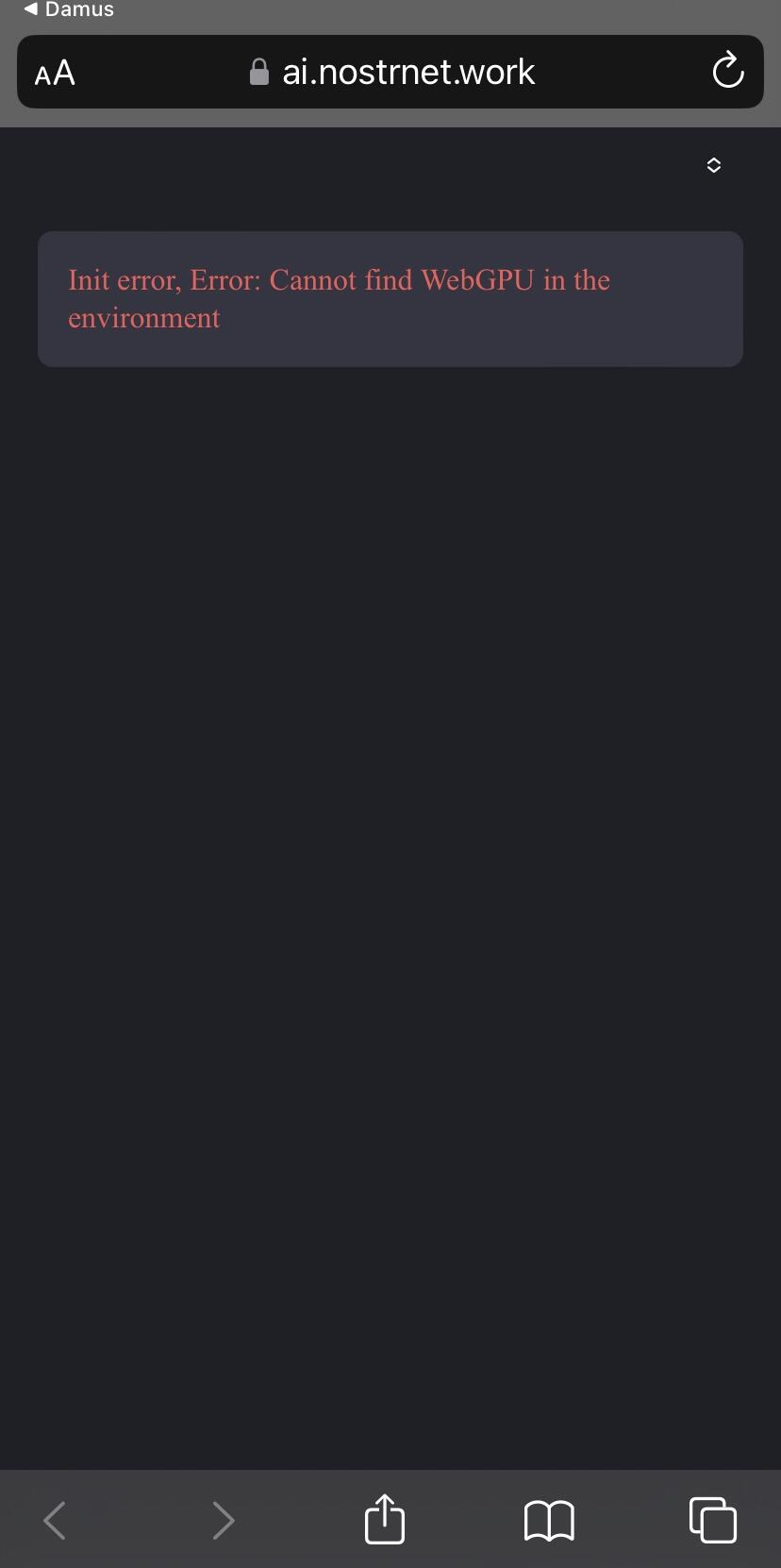

Error on iOS/Safari

thanks. on my mobile device it defaulted to one model. still, it lags quite a bit. haha

Safari/iOS WebKit does not support the WebGPU API yet; it works on every other device. You can try it on a PC, an Android device, or a Mac.

Additionally, Apple is planning to enable WebGPU for Safari, so you will have it very soon.

Sweet! I’ll try it out on my laptop this evening 🤙

Running Graphene OS on Pixel 7a...

I get that same message on my Android...

Chrome?

Vanadium, in my case...

Getting stuck on a stock Pixel 6 using Chrome/Brave at: [System Initalize] Finish loading on WebGPU - arm

Any chance of using the Tensor TPU in the future for quicker responses?

Kiwi Browser. But I just tried Chrome and got the same message again.

I encountered the same issue on Kiwi, but it works fine on Chrome. Have you attempted to switch models?

The primary problem with this app is that the WebGPU API it uses is not supported by all browsers. However, it is expected to be supported by all major browsers very soon.
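For anyone wondering how a page can tell whether their browser is affected, here is a rough sketch of a WebGPU check. It is not the code the demo uses; the names `NavigatorLike`, `GpuLike`, `hasWebGpuApi`, and `checkWebGpu` are made up for illustration. The only real API it relies on is `navigator.gpu.requestAdapter()`, which resolves to `null` when no usable GPU adapter is available.

```typescript
// Minimal local shapes for the parts of the WebGPU API used here, declared
// so the sketch compiles without extra type packages (illustrative names).
interface GpuLike {
  requestAdapter(): Promise<object | null>;
}
interface NavigatorLike {
  gpu?: GpuLike;
}

type WebGpuStatus = "unsupported" | "no-adapter" | "ready";

// Quick check: does the browser expose navigator.gpu at all?
function hasWebGpuApi(nav: NavigatorLike): boolean {
  return nav.gpu !== undefined && nav.gpu !== null;
}

// Fuller check: the API can be present while no usable adapter exists,
// so ask for an adapter before trying to load a model.
async function checkWebGpu(nav: NavigatorLike): Promise<WebGpuStatus> {
  if (!hasWebGpuApi(nav)) return "unsupported";
  try {
    const adapter = await nav.gpu!.requestAdapter();
    return adapter ? "ready" : "no-adapter";
  } catch {
    // Treat a rejected request the same as having no adapter.
    return "no-adapter";
  }
}
```

In a page you would pass the global `navigator` object and show a friendlier message for "unsupported" or "no-adapter" instead of failing while the model loads.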

I think Google is building a Gemini model for it. If they make it open source, we will see multiple variants of it.

I'm not sure what you mean by switch models? I just tried to open nostrnet.work on Chrome and got the message that I needed to install it as a Progressive Web App. So I did that and then tried to open ai.nostrnet.work from the PWA and still get that same message.